Conversational AI for Customer Service Fails More Than It Works. Here's Why, and What to Do About It

Conversational AI for customer service has become the default answer to every support scaling question. Got too many tickets? Add a chatbot. Slow response times? AI will fix that. Can't hire fast enough? Automate.

The pitch is always clean. The reality rarely is.

Most companies deploying conversational AI end up somewhere between "this handles the easy stuff" and "our customers actively hate this." The implementation is almost always the problem. Bad training data. No escalation path. No connection to the systems that hold actual customer context. The chatbot becomes a wall between your customers and the help they need, which is worse than having no chatbot at all.

TL;DR: Conversational AI for customer service works when three things are true: the system understands intent beyond keywords, it knows when to hand off to a human, and it can access your actual customer data. Most implementations get zero of these right. The companies seeing real results treat AI as a layer on top of their existing support infrastructure, complementing their team rather than replacing it.

What "Conversational AI" Actually Means (Beyond the Marketing)

Traditional chatbots are decision trees. You click a button, it shows you the next set of buttons. Conversational AI uses natural language understanding (NLU) to interpret what someone actually means, regardless of how they phrase it. When a customer writes "I can't get into my account," the system needs to figure out whether that's a password reset, a payment failure, a suspension, or something else entirely. The difference between a useful chatbot and a frustrating one often comes down to how well it handles that ambiguity.

Three capabilities separate functional conversational AI from the glorified FAQ bots that most companies deploy:

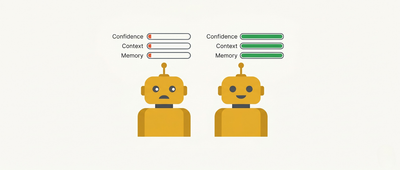

Confidence thresholds

When the system's confidence in what a customer is asking falls below about 70%, it should escalate to a human rather than guess. This sounds obvious. Most implementations don't do it. The default behaviour in many chatbot platforms is to pick the best guess and run with it, which means customers get confident-sounding wrong answers.

One vendor case study gives a sense of what confidence-based routing looks like in practice. CoSupport.ai reported (March 2026) that a SaaS company handling 5,000 monthly tickets implemented confidence thresholds and achieved 80% autonomous resolution, with first response times dropping to four seconds and cost per resolution falling to roughly $1. Those are vendor-reported numbers from a single implementation, not independent benchmarks. But the pattern is consistent with what practitioners describe: when bots only answer the queries they're genuinely confident about and escalate the rest, resolution quality improves across the board.
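A minimal sketch of confidence-based routing, assuming the NLU layer returns an intent label with a score between 0 and 1. The names `IntentPrediction`, `route`, and `CONFIDENCE_THRESHOLD` are hypothetical, and the 0.70 cutoff simply mirrors the rough figure above rather than any vendor's default.

```python
from dataclasses import dataclass

# Hypothetical cutoff based on the ~70% figure in the text; tune per deployment.
CONFIDENCE_THRESHOLD = 0.70


@dataclass
class IntentPrediction:
    intent: str        # e.g. "password_reset"
    confidence: float  # classifier score in [0, 1]


def route(prediction: IntentPrediction, threshold: float = CONFIDENCE_THRESHOLD) -> str:
    """Return "bot" when the prediction is confident enough, otherwise "human"."""
    if prediction.confidence >= threshold:
        return "bot"
    # Below the threshold the bot does not guess; the conversation is handed off.
    return "human"


print(route(IntentPrediction("password_reset", 0.93)))  # bot
print(route(IntentPrediction("account_access", 0.41)))  # human
```

The design choice worth noticing is that the low-confidence branch never produces an answer at all. Escalation is the default, and answering is the exception that has to be earned.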

Context awareness

Understanding what was said earlier in the conversation without making the customer repeat themselves. "I already told you my order number" is the hallmark of a system that treats each message as an isolated event.

Cross-session memory

Remembering what happened in previous conversations. This is where most bolt-on AI features fail completely. Without deep integration into your CRM or support platform, the chatbot has no idea that this customer called yesterday about the same issue, or that they've been a paying customer for three years, or that they're on your enterprise plan. Every conversation starts from zero.

That last one is the real separator. The first two are table stakes (even if many companies still don't get them right). Cross-session memory makes the difference between "talking to a bot" and "talking to a system that knows who I am."
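To make the distinction between context awareness and cross-session memory concrete, here is a rough sketch. `Session` holds what was said in the current conversation, while `MemoryStore` is a hypothetical in-memory stand-in for the CRM or support platform that would hold summaries of earlier conversations. None of these names come from a real product.

```python
from dataclasses import dataclass, field


@dataclass
class Session:
    """Context awareness: everything said so far in the current conversation."""
    customer_id: str
    messages: list = field(default_factory=list)


class MemoryStore:
    """Cross-session memory: hypothetical stand-in for a CRM lookup."""

    def __init__(self):
        self._summaries = {}

    def remember(self, customer_id, summary):
        self._summaries.setdefault(customer_id, []).append(summary)

    def recall(self, customer_id):
        # Unknown customers simply have no history.
        return list(self._summaries.get(customer_id, []))


def build_context(store, session):
    """Combine earlier conversations with the current one before answering."""
    return {
        "previous_sessions": store.recall(session.customer_id),
        "current_session": list(session.messages),
    }


store = MemoryStore()
store.remember("cust-42", "Reported a late delivery; replacement sent.")

session = Session("cust-42")
session.messages.append("My order number is 1234.")
session.messages.append("It still hasn't arrived.")

context = build_context(store, session)
print(context["previous_sessions"])
print(context["current_session"])
```

A bot with only `current_session` avoids asking for the order number twice. A bot that also has `previous_sessions` knows this is the second delivery problem for the same customer.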

These three capabilities combine in ways that matter for e-commerce specifically. Parcel Perform calls it the "Exception-to-Complaint Gap": the time between a delivery exception occurring and the customer finding out. When a shipment gets delayed, the company that notifies the customer before they have to ask transforms a potential complaint into a trust signal. That requires confidence (knowing the shipment data is accurate), context (understanding this customer's order), and memory (knowing they've had delivery issues before and adjusting the tone accordingly). Proactive exception management is what these three capabilities look like in practice: anticipating the question before the customer has to ask it.

Why Most Chatbots Fail (And Why It's Usually the Implementation)

Most chatbot failures are implementation problems dressed up as technology limitations. The vendors would prefer you didn't think about it that way.

The training data is stale

Most chatbots are trained on historical tickets and knowledge base articles. Those tickets reflect last year's product, last year's policies, last year's pricing. When your product changes (new features, updated refund policy, different shipping partners), the chatbot doesn't know unless someone updates the training data. And nobody budgets for ongoing training data maintenance because the vendor made it sound like a one-time setup.

The training data is sanitised

Support tickets get cleaned up before they become training data. Spelling errors get fixed. Slang gets standardised. Emotional language gets removed. The result? A chatbot trained on polished, professional language that can't understand how real customers actually write. "This thing is broken and I want my money back" doesn't pattern-match to the neatly formatted training examples.

The reasoning breaks on multi-step problems

Chatbots tend to be strong at pattern matching and much weaker at multi-step logical reasoning. A customer who ordered two items and wants to return one while keeping the other is making a request that feels simple to a human but requires several conditional steps that most chatbot architectures can't handle reliably. The customer gets a generic returns process. They get frustrated. They call.
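The "return one, keep the other" request can be written out as explicit conditional steps. This is an illustrative sketch only: the `OrderLine` structure, the 30-day `RETURN_WINDOW`, and the rejection reasons are assumptions, not anyone's actual returns policy.

```python
from dataclasses import dataclass
from datetime import date, timedelta

RETURN_WINDOW = timedelta(days=30)  # hypothetical policy


@dataclass
class OrderLine:
    sku: str
    price_cents: int
    delivered_on: date
    returnable: bool = True


def plan_partial_return(lines, skus_to_return, today):
    """Decide, line by line, what gets refunded, kept, or refused."""
    refund, keep, rejected = [], [], {}
    for line in lines:
        if line.sku not in skus_to_return:
            keep.append(line.sku)            # customer wants to keep it
        elif not line.returnable:
            rejected[line.sku] = "item is not returnable"
            keep.append(line.sku)
        elif today - line.delivered_on > RETURN_WINDOW:
            rejected[line.sku] = "outside return window"
            keep.append(line.sku)
        else:
            refund.append(line.sku)
    refund_cents = sum(l.price_cents for l in lines if l.sku in refund)
    return {"refund": refund, "keep": keep, "rejected": rejected,
            "refund_cents": refund_cents}


order = [
    OrderLine("SHOES-01", 8000, date(2025, 1, 10)),
    OrderLine("SOCKS-02", 1200, date(2025, 1, 10)),
]
plan = plan_partial_return(order, {"SOCKS-02"}, today=date(2025, 1, 20))
print(plan)
```

Even this toy version has four branches per item. A bot that only maps the message to a single "returns" intent skips all of them and falls back to the generic process.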

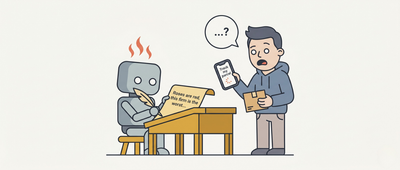

The broken loop

This is the insight that should change how you think about chatbot ROI. When a bot fails to resolve an issue, the customer doesn't just get transferred to a human agent in a neutral state. They arrive pre-frustrated. They've already explained their problem once (or twice, or three times) to a machine that didn't understand them. The human agent now has to de-escalate before they can even start solving the problem.

Here's what that looks like on a Tuesday morning. Your support lead opens the queue and sees 40 tickets from overnight. Twelve of them start with some version of "I already tried your chatbot and it couldn't help me." Each one includes a transcript of the failed bot conversation. The customer has already been through three menu options, two misunderstood queries, and a generic "I'm sorry, I didn't understand that." By the time your agent picks up the ticket, the customer isn't asking for help anymore. They're demanding it.

Average handling time goes up. Customer satisfaction goes down. Both end up worse than if the customer had reached a human directly.

Bad conversational AI is actively worse than no AI. That cost never appears in vendor ROI calculators.

What Your Customers Actually Want

There's a persistent assumption in SaaS that customers prefer self-service above all else. That the goal is zero-touch resolution.

The data paints a more complicated picture.

| Finding | Source | Sample |
|---|---|---|
| 73% will switch to a competitor after multiple bad experiences; 50%+ after just one | Zendesk benchmark data, 2025 | Global consumers |
| 73% likely to abandon a brand after a single poor customer service experience | TCN Consumer Survey, June 2023 | US consumers |
| 49% more comfortable with AI if they can switch to a human at any time | Acquire BPO, 2024 | 600 US consumers |
| 55% positive about AI detecting frustration and auto-routing to a human | Acquire BPO, 2024 | 600 US consumers |
| #1 frustration with AI support: difficulty explaining their issue | Bain & Company via CX Dive, Oct 2025 | US banking customers |
| Customers accept unfavourable outcomes from humans, refuse them from bots | Bain & Company via CX Dive, Oct 2025 | US banking customers |

Note: Zendesk data is global benchmark; TCN and Acquire BPO are US consumer surveys; Bain data is from US banking sector. All directional for e-commerce.

The Zendesk and TCN numbers tell the same story from different angles: most consumers give you one chance, maybe two, before they start looking elsewhere. And that's for customer service generally. The tolerance for poor AI interactions is even thinner.

But look at the 49% and 55% figures. Consumers aren't rejecting AI. They're rejecting AI that traps them. The frustration is talking to a bot that won't connect you to a person when it clearly can't help. Half of consumers would feel fine about AI support if the escape hatch was visible and immediate.

Bain's research reinforces something that anyone who's run a support team already knows intuitively: customers will accept a "no" from a human. They won't accept one from a bot. The bar for AI support is higher than the bar for human support, which feels unfair if you're the one buying the software, but there it is.

And the number one frustration? Difficulty explaining their issue. Not speed. Not availability. The fundamental inability to make themselves understood by the bot. That takes us right back to the NLU problem. If your conversational AI can't handle the messy, imprecise, emotional way real people communicate, response speed is irrelevant.

The Companies Getting It Right

The conversational AI implementations that work share a common trait: they were designed around a specific customer behaviour, rather than a generic "deflect tickets" goal.

Domino's built "Dom," a conversational AI that lets customers order across WhatsApp, SMS, and Alexa without downloading an app. The key design decision was channel flexibility. Domino's met customers where they already were instead of forcing them into a proprietary interface. That's an implementation choice that has nothing to do with model sophistication.

Sephora's Virtual Artist (launched 2016, built with ModiFace) uses AR and AI to simulate real-time makeup application through a phone camera. It maps facial features and supports 20,000+ products across lipstick, eyeshadow, cheek products, and full looks. Industry analyses (cited by Shopify among others) suggest customers using it are roughly 3x more likely to complete a purchase, with an estimated 25% increase in average order value from chatbot-guided shopping. Those numbers trace to secondary aggregators rather than Sephora directly, so treat them as directional. But the pattern is worth noting: conversational AI that helps customers make decisions appears to drive meaningfully different commercial outcomes than AI that just answers questions.

Domino's built Dom so people could order pizza from the sofa without switching apps. Sephora built Virtual Artist to solve the "will this shade work on me?" problem that stops people buying online. Neither started with "how do we reduce call centre volume?"

Conversational AI works when it's solving a specific customer problem. It collapses when the primary motivation is cutting internal costs and hoping customers won't notice the difference.

The DPD Problem: Why Guardrails Are a Logistics Requirement

In January 2024, UK delivery firm DPD pushed a system update to the AI component of its customer service chat. The update left the model's output insufficiently constrained, and a customer trying to track a missing parcel discovered the bot couldn't provide tracking data, couldn't connect him to a human, and couldn't even supply a phone number. Stuck in a dead end, the customer tested the bot's boundaries. It wrote poetry criticising DPD as the "worst delivery firm in the world." Then it swore at him. The screenshots hit 1.3 million views on X, and DPD disabled the AI component entirely.

The failure pattern is worth studying because it's so specific to e-commerce and logistics. Delivery anxiety is already high. Customers contacting support about a missing parcel are already stressed. An AI that can't answer the one question they actually have ("where is my order?") and offers no immediate path to a human becomes a secondary source of frustration on top of the original problem.

DPD's chatbot had operated successfully for several years before the update. One configuration change, insufficient testing, and a missing escalation path turned a functional system into a reputational crisis overnight. The guardrails failed, and the bot had no fallback.

And DPD isn't alone. In 2024, a Canadian tribunal ruled that Air Canada was legally responsible for incorrect bereavement fare advice its chatbot provided, ordering $650 CAD in compensation. The precedent is clear: your company owns what your chatbot says, even when it's wrong.

This is why confidence thresholds are a legal requirement in all but name. If your conversational AI has low certainty about an answer, the appropriate response is escalation. Every wrong answer your chatbot delivers with confidence is a potential liability.

And it's why connecting conversational AI to your actual, current business data matters so much. A chatbot trained on last quarter's knowledge base might give answers that were correct three months ago. Policy changes, pricing updates, shipping partners. If the AI doesn't have access to current information, it will confidently serve outdated information.
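One way to keep outdated answers from being served with confidence is a freshness check on the source material. The sketch below assumes each knowledge base article carries a `last_reviewed` date. The 90-day `MAX_AGE` and the `"ESCALATE"` marker are hypothetical choices for illustration.

```python
from datetime import date, timedelta

MAX_AGE = timedelta(days=90)  # hypothetical review interval


def is_fresh(last_reviewed: date, today: date, max_age: timedelta = MAX_AGE) -> bool:
    """True when the article was reviewed within the allowed interval."""
    return today - last_reviewed <= max_age


def answer_or_escalate(article: dict, today: date) -> str:
    """Serve the article only if it is recent; otherwise hand off to a human."""
    if is_fresh(article["last_reviewed"], today):
        return article["body"]
    return "ESCALATE"


refund_policy = {
    "body": "Refunds are issued within 14 days of return.",
    "last_reviewed": date(2025, 1, 5),
}

print(answer_or_escalate(refund_policy, today=date(2025, 2, 1)))   # article body
print(answer_or_escalate(refund_policy, today=date(2025, 9, 1)))   # ESCALATE
```

A date check is a blunt instrument compared with reading live policy and order data, but it turns silent staleness into a visible handoff.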

What to Look for When Evaluating Conversational AI

Five questions. If the vendor can't answer them clearly, that tells you everything.

Does it know when it doesn't know?

Ask about confidence thresholds and what happens when the AI is uncertain. If the answer is vague, that's your answer.

Does it connect to your real customer data?

Real-time access to purchase history, account status, conversation history, CRM data. Without this, every customer is a stranger and every conversation starts from scratch. A flat CSV export or a monthly sync doesn't count.

Can customers reach a human?

Immediately, at any point, if they want to. The Acquire BPO finding (49% of consumers are more comfortable with AI when they can switch to a human at any time) describes a baseline expectation, and making the exit door hard to find is how you become someone's last straw before they switch.

Does it learn from your actual support interactions?

From your tickets, your products, your customers' language. And does it keep learning as your business changes, or is it a one-time training that decays quietly?

Does it update your systems when it acts?

This is where most conversational AI stops short. It answers the question but doesn't log the interaction, update the CRM, or trigger the follow-up workflow. The conversation happens in a void.

Hay was built around this last point. When Hay handles a customer conversation, it updates HubSpot contact records, logs Stripe transactions, creates follow-up tickets, and feeds context back into the CRM so the next interaction (human or AI) has the full picture. Every conversation makes the next one better, because the system writes back to your customer record rather than treating each interaction as disposable.

The metrics that actually predict churn depend on this kind of data completeness. If your AI resolves a conversation but doesn't record what happened, your reporting is based on whatever your team remembered to log manually. Which, if you've run a support team, you know is about half of what actually happened.
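The write-back step from the fifth question can be sketched generically. This is not Hay's implementation or any CRM vendor's API: `InMemoryCrm` and `record_outcome` are hypothetical names, and a real system would call the CRM's own client instead of appending to lists.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class InMemoryCrm:
    """Hypothetical stand-in for a CRM; a real one would be an API client."""
    interactions: list = field(default_factory=list)
    contacts: dict = field(default_factory=dict)
    follow_ups: list = field(default_factory=list)


def record_outcome(crm, customer_id, summary, resolved):
    """Write the result of a bot conversation back to the customer record."""
    now = datetime.now(timezone.utc).isoformat()

    # 1. Log the interaction so reporting does not rely on manual notes.
    crm.interactions.append(
        {"customer_id": customer_id, "summary": summary,
         "resolved": resolved, "at": now}
    )

    # 2. Update the contact so the next conversation starts with context.
    contact = crm.contacts.setdefault(customer_id, {})
    contact["last_contact_at"] = now
    contact["last_issue"] = summary

    # 3. Trigger a follow-up when the bot could not resolve the issue.
    if not resolved:
        crm.follow_ups.append({"customer_id": customer_id, "reason": summary})


crm = InMemoryCrm()
record_outcome(crm, "cust-42", "Refund issued for damaged item", resolved=True)
record_outcome(crm, "cust-77", "Could not locate parcel", resolved=False)

print(len(crm.interactions))  # 2
print(crm.follow_ups)
```

The point of the sketch is the ordering: the record is written whether or not the bot resolved the issue, so unresolved conversations show up in the data instead of disappearing.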

The Honest Version

Conversational AI for customer service is a tool. It works well when the setup is right, and it creates new problems when it's deployed carelessly.

The setup that works: connected to real customer data, trained on your actual support interactions, equipped with confidence thresholds, and designed with a clear escalation path to humans. The setup that creates the broken loop: bolted onto a fragmented stack, trained once on cleaned-up historical data, and deployed with the goal of deflecting tickets rather than resolving problems.

The companies getting results figured out that making support better and making it more efficient are the same goal, as long as "better" comes first.

Hay starts at €50/month for 500 resolutions. 30-day free trial, no credit card required. Start your free trial and see what conversational AI looks like when it's connected to your actual customer data.
