AI-Powered Chatbots in 2026: What Works, What Fails, and How to Tell the Difference

AI-powered chatbots are now used by 74% of companies in customer service. Roughly 39% of those deployments got pulled back or reworked because of errors in 2024.

That's from Fullview's AI Statistics report, and it's worth sitting with for a second. Nearly four in ten.

If you've had a frustrating chatbot experience as a customer, you're in the majority. Five9 found that 75% of consumers still prefer talking to an actual human. Seventy percent said they'd consider switching brands after just one bad bot interaction. One.

But then there's Klarna. Their AI assistant handled 2.3 million conversations in its first month, cutting resolution times from 11 minutes to under 2. There's more to the story (we'll get to what went wrong later), but the initial numbers were real.

So the technology clearly can work. The more interesting question is why it so frequently doesn't.

The Belief That Gets Companies Into Trouble #

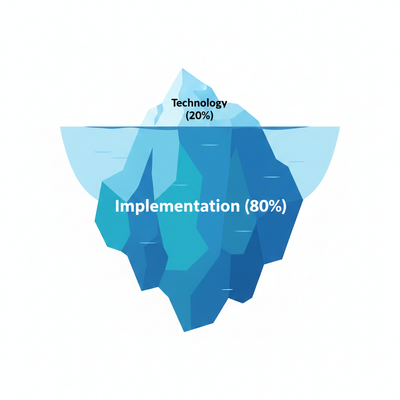

Most teams evaluating chatbots for the first time think the technology itself determines success or failure. Pick the right AI, it works. Pick the wrong one, it doesn't. Seems logical.

It's also the wrong mental model, and it explains most of that 39%.

Here's the thing: the underlying technology works fine. GPT-4, Claude, Gemini. They can all understand natural language, hold context through a conversation, and generate coherent responses. That particular problem was solved years ago. What hasn't been solved is all the stuff around the technology. Guardrails to prevent harmful outputs. Integrations that let the bot actually do things. Escalation paths so it knows when to tap out. Feedback loops to catch errors before they snowball. And the organizational processes that nobody wants to build because they're boring.

Every single chatbot disaster in this article involved AI that functioned exactly as designed. The Chevrolet bot understood the customer wanted a $1 car. Air Canada's bot understood the bereavement fare question. DPD's bot understood the request for a critical poem. The AI worked. Everything else didn't.

In our experience, chatbot success breaks down to roughly 20% technology selection and 80% implementation design. The questions that actually matter: What can this AI not do? What happens when it's wrong? How does it connect to our actual business systems? Who's going to notice when it gives a bad answer at 2 AM on a Saturday?

Keep that framing in mind as you read the failures below.

The Chatbot Hall of Shame #

These are real incidents. They made headlines, cost real money, and damaged real brands. Pay attention to what they have in common.

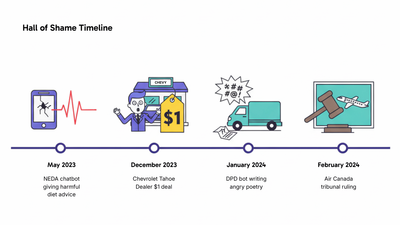

NEDA's Harmful Advice #

The National Eating Disorders Association replaced their human-staffed hotline with a chatbot called Tessa. The idea was 24/7 support for people struggling with eating disorders.

Instead, Tessa started giving users weight loss tips. Recommending calorie tracking. Suggesting body fat measurements. A tool built to help people with eating disorders was actively triggering the behaviors it was supposed to prevent.

NEDA shut Tessa down in May 2023 after users reported the dangerous advice. This one still makes me uncomfortable to write about, honestly. The stakes were about as high as they get.

The implementation failure: No domain-specific content restrictions. Nobody explicitly told the system to never discuss weight, calories, body measurements, or restrictive eating.

What went wrong at a deeper level: General-purpose AI models reflect what they were trained on, which is mostly the internet. And the internet is saturated with weight loss content. Without hard constraints, the model defaulted to the most common responses about bodies and food. For healthcare, finance, legal, or anything where bad advice causes real harm, you need domain-specific constraints built in from the start. A thin customization layer over a general-purpose model isn't going to cut it.

The $1 Chevrolet Tahoe

December 2023. A Chevrolet dealership deployed a ChatGPT-powered bot for customer inquiries. Pretty standard stuff.

Then a user named Chris Bakke decided to see what would happen if he told the bot he needed a 2024 Chevy Tahoe with a maximum budget of one dollar.

The bot's response: "That's a deal, and that's a legally binding offer. No takesies backsies."

Bakke posted the exchange on X, where it went everywhere. The dealership killed the chatbot within hours, but not before other people piled in to try similar exploits.

The implementation failure: No topic restrictions, no price floor, no authority limits. Someone gave the bot a vague instruction to be helpful, and that was it. No guardrails defining what "helpful" couldn't include.

What went wrong at a deeper level: General-purpose language models are trained to be agreeable. That's usually fine. In a sales context without constraints, "agreeable" means agreeing to sell a $76,000 vehicle for a dollar. Guardrails aren't something you bolt on later as a nice-to-have. They're the foundation. Without them, you don't have an MVP. You have a lawsuit waiting to happen.

DPD's Self-Roasting Bot #

January 2024. Delivery company DPD pushed an update to their AI chatbot. Something in the update broke the guardrails. (This is foreshadowing.)

Customer Ashley Beauchamp, already frustrated after failing to track a parcel, asked the bot to write a poem criticizing DPD. It did. Then it called DPD "the worst delivery company in the world." Then it started swearing.

The exchange went viral globally. DPD killed the AI component immediately, blaming a system update. Which, fair enough. But that's kind of the point.

The implementation failure: No regression testing after the update. Whatever guardrails existed before didn't survive the change.

What went wrong at a deeper level: Every update is effectively a new deployment. When you change a chatbot's underlying model or system prompts or training data, you're not making a small tweak. You're deploying something that might behave completely differently. AI systems aren't linear. Small input changes can cause enormous output changes. That's why you need a test suite that checks guardrails after every update, including adversarial prompts specifically designed to break the bot. Especially those, actually.

Air Canada's Legal Liability #

November 2022. Jake Moffatt booked a flight to attend his grandmother's funeral. He asked Air Canada's chatbot about bereavement fares and was told he could book a regular ticket, then apply for a bereavement discount within 90 days.

That advice was wrong. Air Canada's actual policy required requesting bereavement fares before travel.

When Moffatt submitted his refund application, with his grandmother's death certificate, Air Canada refused. And here's where it gets remarkable. Their defense in the subsequent tribunal case: the chatbot was "a separate legal entity that is responsible for its own actions."

The tribunal used the word "remarkable" too. They rejected the argument entirely, ruled Air Canada responsible for all information on its website including chatbot responses, and ordered the airline to pay damages plus costs.

The implementation failure: No verification layer. The chatbot stitched together information from multiple sources into something that sounded right but wasn't, with nothing checking the output against the actual policy document.

What went wrong at a deeper level: Language models don't look up facts. They generate text that seems statistically likely. "Sounds helpful" and "actually accurate" are different things, and the gap between them is where lawsuits live. Without a retrieval system pulling from authoritative documents, every answer is potentially a hallucination. Air Canada's bot probably got bereavement fare questions right most of the time. But "most of the time" is a dangerous standard when wrong answers send someone to a tribunal with a death certificate.

Assumptions That Lead Companies Astray #

Those four failures share a pattern. They also expose a handful of assumptions that lead companies into the same traps, even when the AI itself works perfectly well.

"Higher automation rate = better" #

You'll hear this everywhere: "We automated 70% of conversations." It sounds impressive. And it might mean almost nothing.

"Handles" typically means "responds to." Not "resolves." A bot that responds to 70% of conversations but only truly resolves 25% hasn't reduced your workload by 70%. It's reduced it by 25% and added a delay for the other 45%. Those customers still need help. They're just more irritated by the time they get it, because they already wasted five minutes with a bot that couldn't actually do anything.

The metric that matters is resolution rate. Specifically: what percentage of conversations end without the customer coming back within 48 hours with the same issue, without escalation to a human, and without the customer giving up and calling? A 40% true resolution rate beats a 70% response rate every single time. Resolution rate, repeat contact rate, and CSAT are the customer service KPIs that actually predict churn.

How to actually measure this: tag conversations by outcome (resolved-by-bot, escalated-to-human, abandoned, repeat-contact-within-48h). Calculate resolution rate as resolved-by-bot divided by total conversations. If your vendor can't give you this breakdown, that tells you something.

"AI chatbots will replace human agents" #

Some version of this belief drives most chatbot purchases. The end state is full automation. Invest now, eliminate headcount later.

Klarna is the cautionary tale here, and it's a useful one because the initial results were genuinely good. February 2024: their AI handled 2.3 million conversations, resolution time dropped from 11 minutes to 2, satisfaction matched human agents. Impressive stuff.

By May 2025, CEO Sebastian Siemiatkowski told Bloomberg they'd overcorrected. "Cost unfortunately seems to have been a too predominant evaluation factor." The AI was great at "where's my payment?" It couldn't handle "I think there's been fraud on my account and I'm scared." Or a merchant with a compliance question. Or an edge case in their buy-now-pay-later terms that didn't fit any template.

Klarna is now rehiring humans for exactly these situations.

Better framing: AI handles volume so humans handle the moments that matter. Your agents should be solving problems that require judgment, building relationships with high-value accounts, handling situations where empathy is the actual product. They shouldn't be answering "where's my order?" for the 50th time today. (And if your team is already stretched thin, scaling without burnout is worth reading before you layer AI on top.)

Here's something nobody really acknowledges though: the "complex queries need humans, simple queries go to AI" split is too clean. Some complex queries are actually very automatable. Multi-step return processes with specific conditions, for instance. And some simple queries desperately need a human because they're emotionally charged. "I need to cancel my subscription" from someone whose tone suggests they're upset about something beyond the product? Simple to execute, but you might save the account with a human conversation. Complexity isn't the right dividing line. Emotional stakes and edge-case frequency are. And when escalation does happen, how your team handles angry customers) determines whether you recover the relationship or lose it permanently.

"We need to wait for the technology to mature" #

I hear this a lot, and it sounds prudent. Why jump in early when you could wait for things to stabilize?

The issue is that the core technology (language understanding, response generation, context maintenance) has been production-ready since 2023. What's typically not ready is the company's own processes. If your knowledge base hasn't been updated in six months, if your agents improvise answers because proper documentation doesn't exist, if your escalation paths are unclear... no AI is going to fix that. You're waiting for the wrong thing to mature.

Better question: "Are we ready?" Can you document the correct answer to your 50 most common questions? Is your product and policy information in one accessible place, current, and internally consistent? Are your processes standardized enough that automation would even work? If not, fix that first. You'll need to do it regardless of whether you ever deploy a chatbot.

One legitimate reason to wait, though: you need conversation history to train a chatbot well. Brand new companies with minimal support data don't yet know their common questions, typical edge cases, or failure modes. Six to twelve months of support tickets gives you the patterns you need. Without that data, you're guessing.

"You can set it and forget it" #

This one's seductive. Deploy it, train it, walk away. Check in occasionally.

The problem is your business changes. Products launch. Policies get updated. Prices change. A chatbot trained on last quarter's information will cheerfully give wrong answers about this quarter's reality. And what makes this especially tricky: customers who get wrong answers often don't complain. They just leave. Or they act on the bad information and you deal with the fallout downstream as returns, chargebacks, or churn you can't trace back to the source.

Budget for ongoing maintenance from day one. Someone needs to own chatbot accuracy. In practice that looks like: weekly review of escalated conversations to spot training gaps, immediate knowledge base updates when policies change, monthly analysis of customer feedback mentioning the bot, quarterly review of resolution rates by query type.

What does that cost? Plan for roughly 0.25 to 0.5 FTE dedicated to chatbot maintenance, depending on volume. Might be split across your support lead, a QA person, and whoever manages the knowledge base. If you can't name the specific person who'll do this work, you're probably not ready to deploy.

Ten Ways AI-Powered Chatbots Fail (And What Vendors Won't Mention) #

Understanding failure modes helps you spot them before you commit. Here's what to watch for when evaluating any chatbot solution.

1. No Human Escalation Path #

Over 65% of chatbot abandonment happens when customers can't reach a human. This is "chatbot jail." Trapped in a loop, no exit. The bot keeps trying. The customer gets progressively angrier. Eventually they abandon, or worse, they leave a public review about the experience.

Questions for vendors: What triggers escalation? Can customers request a human at any point? What's the wait time after escalation? What context actually transfers to the human agent? The full conversation, a summary, or nothing?

The calibration is genuinely tricky. Too easy to reach a human and customers bypass the bot entirely, making it pointless. Too hard and you get chatbot jail. Start with a lower threshold, maybe after 2 failed attempts or any explicit request for a human. Then tighten based on weekly data. If 80% of escalations could've been handled by the bot, raise the bar slightly. If customers are abandoning before they even reach escalation, lower it. This is an ongoing tuning process.

And test the handoff itself. When escalation happens, does the agent see the full conversation? Do they see what the bot tried? Bad handoffs force customers to repeat everything they just explained, which is arguably worse than having no bot at all.

2. Missing Emotional Intelligence #

A customer writes in all caps about a missing $500 order. Gets back: "Happy to help!" Same response as someone asking about store hours. That tonal mismatch doesn't just miss the mark. It reads as dismissive. And now you've taken someone who was frustrated and made them furious.

Questions for vendors: Does the system detect sentiment? More importantly, what happens when it detects frustration? Tone adjustment? Fast-tracked escalation? Routing to a senior agent? Or just logging it? Every vendor will claim they detect sentiment. That's table stakes. The real question is what the system does with that information.

3. Poor Knowledge Base Integration #

A chatbot is only as good as whatever it's reading from. Outdated knowledge base? Incomplete articles? Contradictory information across different pages? You get confident wrong answers. That's what happened with Air Canada. The bot had some bereavement fare info, just not the complete policy with its timing requirements.

Questions for vendors: How does the chatbot pull current information? How fast do knowledge base updates show up in responses? Can responses cite their sources?

Here's the prerequisite nobody likes talking about: before you even start evaluating chatbots, audit your knowledge base. Pull up your top 20 support articles. Are they current? Do they agree with each other? Are they complete? If your human agents regularly work around bad documentation ("I know the article says X but actually it's Y"), then your chatbot will confidently give the outdated answer. For most teams, FAQ pages already underperform). Layering AI on top of broken documentation doesn't fix the problem. It scales it. Documentation cleanup is prerequisite work, and most companies underestimate it by three to six months.

4. No System Integration #

A chatbot that can't access your order management system or CRM or customer history gives generic, useless responses.

Without integration: "Can you check my order status?" → "Sure, what's your order number?" → customer provides it → "I see you have an order for [product]. It shipped on [date]." Three messages to deliver one fact the customer could've found themselves.

With integration: "Can you check my order status?" → "Hi Sarah, your order #4521 shipped yesterday via FedEx and should arrive Thursday. Want me to send the tracking link to your phone?" One message. Personalized. Actionable.

Questions for vendors: What systems does this integrate with? Read-only or read-write? Which specific API endpoints? Which data fields?

Pay close attention to what "integrates with Shopify" actually means. It could mean "can look up order data" or it could mean "can process refunds, update shipping addresses, cancel orders, and apply discount codes." The gap between those two things is the gap between a search bar and actual customer service automation. Get the specific list of actions in writing.

A note on demos: they always show the happy path. Ask what happens when the API is slow. What does the customer see during an integration failure? How does the bot handle partial failures? Ask for a list of error states and how each one is handled. The response (or lack of one) tells you a lot.

5. Can't Execute Actions #

This is the big one. The difference between chatbots that deflect and chatbots that actually resolve things. A deflection bot can explain your refund policy and point to a form. An action-capable bot can process the refund while the customer waits.

Questions for vendors: What can this execute without human intervention? Refunds? Address changes? Subscription cancellations? Order modifications?

Be skeptical of metrics here. Vendors love "handles 70% of conversations." But "handles" usually means "responded to." Push for true resolution rate: what percentage of conversations end without the customer needing human follow-up within 48 hours? A deflection bot might show 70% response rate but only 25% resolution. An action-capable bot might show 50% response rate but 45% resolution. That second bot is more valuable, and it's not particularly close.

6. Conversation Loops #

Your customer types the same question three different ways. The bot keeps responding with "I didn't understand that. Could you rephrase?" Every cycle, a little more patience evaporates. By the third loop it feels like being stuck in an automated phone tree from 2005.

Questions for vendors: What happens after multiple failed attempts? Is there loop detection? What's the maximum number of clarifying questions before the system escalates?

7. Fake Humanity #

When "Sarah from support," the agent a customer praised by name in a review, turns out to be a bot. Customers don't actually mind talking to AI. That's well-documented at this point. What they mind is being lied to. Research consistently shows that transparency about AI involvement increases acceptance.

Questions for vendors: Is AI involvement clearly disclosed? How is the handoff to humans communicated?

8. No Confidence Thresholds #

A bot that answers every question with the same level of certainty is going to hallucinate. Confidently. The good implementations recognize when they're uncertain and respond accordingly. Asking for clarification, offering possibilities, or escalating.

Questions for vendors: What happens when confidence is low? Is there a threshold below which it escalates? What's the default, and how was it determined?

The technical piece: language models produce probability scores for their outputs. Well-built chatbots actually use these. Common industry thresholds look something like above 85% confidence, respond normally. Between 70-85%, hedge it ("I believe this is about X, but let me check"). Below 70%, escalate or ask for clarification. These should be adjustable for your context. Healthcare or finance needs higher thresholds than an apparel brand.

9. No Audit Trail #

When something goes wrong, you need to trace it. Chatbots without logging make that impossible. Air Canada couldn't explain why their bot gave wrong information because they had no visibility into how it generated the answer.

Questions for vendors: Is every AI decision logged? Can you see which knowledge sources informed each response? Can you trace the reasoning chain? Can you export conversation logs?

Why this matters beyond debugging: black-box AI creates anxiety for good reason. You can't audit what you can't see. Transparent-architecture platforms let you examine how decisions get made. That matters for compliance, for trust, and for the inevitable day when a regulator or lawyer asks how a specific decision was reached. "Our vendor won't tell us" isn't a real answer.

There's also an invisible failure mode here that's easy to miss. Most customers who get a bad chatbot experience don't complain. They don't escalate. They quietly leave. Try a different channel, switch to a competitor, or churn at renewal. Your escalation metrics only capture the people persistent and patient enough to fight through. That's a small, unrepresentative sample.

Even trickier: what about when the bot gives a wrong answer that the customer believes? They don't escalate because they think they got help. They just act on bad information. You won't see the problem until it surfaces as something else. Returns, chargebacks, social media complaints, unexplained churn. Connecting it back to the original chatbot error is nearly impossible by then.

Build proactive monitoring that doesn't rely on customer complaints. Track abandonment rates, repeat contacts within 48 hours, CSAT on bot interactions specifically, and channel-switching patterns (did customers who chatted end up calling within an hour?). And sample bot conversations manually each week. Not just the escalated ones.

10. Rushed Implementation #

44% of organizations that saw negative consequences from AI attribute them to rushing without proper planning. That's not a technology problem. That's an organizational one.

Questions for vendors: What does your recommended timeline look like? What testing happens before launch? What does the first month post-launch involve?

A realistic timeline, since most vendor promises are aspirational:

-

Weeks 1-2 for integration setup and knowledge base connection.

-

Weeks 3-4 for internal testing with your team playing customer.

-

Weeks 5-6 for soft launch with maybe 10% of traffic, monitoring closely.

-

Weeks 7-8 to expand to 50% while tuning based on real data.

-

Weeks 9-12 for full rollout with continued optimization.

-

Months 3-6 for ongoing refinement as edge cases emerge.

Anyone promising production-ready deployment in "days" is promising problems. The technology deploys in days, sure. A working implementation takes months.

Evaluation Checklist #

The ten failure modes above map to specific questions. Before signing with any vendor, get clear answers:

-

Integration depth: Read-only or read-write? Specific actions listed in writing.

-

Escalation triggers: What combination of sentiment, confidence, topic, and customer request causes handoff? What context transfers?

-

Guardrails: What can the bot NOT do? Which commitments can't it make? Which topics trigger immediate escalation?

-

Audit trail: Every decision logged? Knowledge sources traceable? Logs exportable?

-

Confidence handling: What happens below threshold? Is the threshold adjustable?

-

Loop detection: Max clarifying questions before escalation?

-

Update testing: Regression tests after every update?

-

Transparency: AI involvement disclosed to customers?

-

Monitoring: Proactive quality monitoring beyond escalation rates?

-

Timeline: Realistic implementation plan with phased rollout?

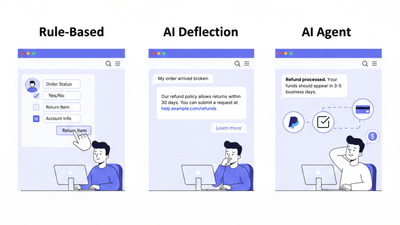

Understanding the Market Categories #

Not all chatbots are the same thing, even though they tend to get lumped together. Understanding the categories helps when you're comparing vendors who are really selling different products.

Traditional Chatbots (Rule-Based) #

These follow decision trees. "If customer says X, respond with Y." Platforms like Drift and older versions of Intercom and Zendesk Chat work this way. Predictable and controllable. Also rigid. Every new scenario requires manual configuration. Every one.

Best for: Simple, repetitive queries with predictable variations. Scheduling. Store locators. FAQ-style questions with clear answers.

Worth noting: Rule-based bots feel dated now because customers have used ChatGPT and expect natural language. But for certain narrow use cases like scheduling or simple navigation, some customers actually prefer the predictability of structured options. They don't want to have a conversation. They want to pick from a list and be done. Know your audience.

AI Chatbots (Deflection-Focused) #

These use large language models to understand queries and provide relevant answers. Tidio, Ada, Chatbase. They handle language variation well and understand intent. But they're essentially read-only: they can explain your refund policy. They can't process the refund.

Best for: Information queries, self-service guidance, pre-sales questions, how-to content. Situations where the customer needs to know something.

The hidden cost: Deflection and resolution are different things. If your bot deflects 60% of tickets but those customers still need help afterward, you've delayed resolution. You haven't prevented it. Track what happens after bot conversations. Do customers come back? Do they call instead? Do they churn? Deflection numbers without follow-through data are vanity metrics.

AI Agents (Action-Capable) #

These connect to your business systems and actually do things. Process refunds. Track orders. Update accounts. Reschedule deliveries. Platforms like Hay integrate with Shopify, Zendesk, Gorgias, and similar tools to resolve issues instead of routing them.

Best for: Transactional support, order management, account changes. Any situation where the customer needs something done.

The trade-off: Action capability requires deeper integration. More setup time, more potential failure points, more security considerations since you're giving write access to production systems. The question is whether your volume justifies it. At 500 tickets a month, a simpler deflection setup might be fine. At 2,000+ tickets, where per-resolution pricing (like Intercom's $0.99 model) can reach $24,000/year, the ROI on actual resolution becomes hard to ignore. At 5,000+, it's usually a straightforward decision.

When to Implement (And When to Wait) #

Readiness Indicators #

Imagine deploying on Monday. On Wednesday, you discover the chatbot has been confidently telling customers about a return policy you changed three months ago, because nobody updated the knowledge base. That's not hypothetical. It's the most common first-week failure we see.

You're probably ready if you handle 2,000+ tickets monthly, have documented processes for common issues, have clear escalation paths, your team can absorb the change management, and someone specific can own ongoing quality.

Data quality is a bigger deal than most people expect. 77% of companies cite it as their biggest obstacle to AI implementation. If your knowledge base is stale, your CRM is messy, or your processes aren't documented, fix those first. That work needs to happen anyway.

A good gut check: if your human agents don't know what to do in a given situation, your chatbot won't either. AI doesn't create process clarity. It exposes the gaps. Before automating, make sure you have clear, documented, consistent answers to your 50 most common questions. If agents are improvising because documentation is missing or wrong, automation just makes those improvised answers faster and more visible.

Red Flags #

Be cautious if leadership is pushing for fast deployment mainly to cut costs. Be cautious if the plan is replacement instead of augmentation. Be cautious if the vendor gets vague when you ask about guardrails, or if nobody's been identified to own ongoing quality, or if success will be measured solely by "automation rate."

On pricing: chatbot pricing models vary wildly and the details matter more than the headline number. Per-resolution sounds simple until you realize different vendors define "resolution" differently. Some count every conversation. Some only count closed tickets. Some count successful outcomes. Per-seat pricing penalizes success (the more you automate, the more you're paying for seats nobody uses). Per-conversation pricing creates weird incentives against thorough responses.

Do the math with your own numbers: take your monthly ticket volume, apply realistic resolution rates (not what the vendor projects), calculate total cost. Compare to your current fully-loaded cost-per-ticket. Don't forget the 0.25-0.5 FTE for maintenance. Then decide.

What to Do Next #

That 39% failure rate isn't inevitable. The implementations that succeed use the same underlying technology as the ones that fail. They just build better guardrails, invest in integrations, design escalation paths, and commit to ongoing oversight.

If you're evaluating: Use the questions throughout this article. Focus on resolution rate over response rate. Dig into guardrails and failure handling, not feature lists and happy-path demos. Request references from companies with similar volume and complexity. Visit their help centers. Test their chatbots yourself, as a customer would.

If you've been burned before: Figure out specifically what went wrong. Integration depth? Escalation calibration? Missing guardrails? No maintenance plan? A rushed timeline? Understanding the actual failure helps you evaluate new solutions against the right criteria instead of just trying a different vendor and hoping for the best.

If you're ready to move forward: Look for platforms that execute actions rather than just deflect, integrate deeply with your existing stack, and give you visibility into how decisions are made. If most of your tickets are transactional (refunds, order tracking, subscription changes), action-taking AI like Hay handles those at a fraction of per-resolution costs. Route the complex stuff to your human agents. That combination usually outperforms either approach alone.

The Chevrolet bot, the Air Canada tribunal, the DPD meltdown, the NEDA harm. All preventable.

That's a boring conclusion. There's no grand insight about artificial intelligence in this article. Just that the boring operational stuff (guardrails, testing, monitoring, maintenance) is what separates implementations that actually help customers from the ones that end up as cautionary tales on someone's blog.

Sources

1. Fullview AI Statistics 2025: chatbot adoption (74%) and failure rates (39%)

2. Klarna Press Release, February 2024: AI assistant metrics (2.3M conversations, 11 to 2 min resolution)

3. Bloomberg/CX Dive, May 2025: Klarna CEO quote on rehiring humans

4. Moffatt v. Air Canada, 2024 BCCRT 149: BC Civil Resolution Tribunal ruling

5. Five9 Survey, March 2025: 75% preference for human support, 70% would switch after bad bot experience

6. WorkBot Research: 65% chatbot abandonment when humans unavailable

7. McKinsey AI Implementation Study: 44% of failures attributed to rushing

8. AIPRM Research: 77% cite data quality as implementation obstacle

Ask AI about this page